[Across several recent essays in 2026, I’ve been exploring what remains uniquely human in an age of AI. This is a companion piece, less essay than field report, that shares what is emerging as we try, in practice, to build AI that disappears.]

Your body knows where your arm is. You do not have to look. Even in the dark, even asleep, something in you tracks the position of every limb — a silent, continuous awareness that physiology calls proprioception. Raise your hand and you know you are the one raising it. No one has to tell you.

Thought has no such ability.

The physicist David Bohm spent his last decades on this problem. He had worked on quantum mechanics with Einstein and on plasma physics with Oppenheimer. But in the end, the thing that consumed him was simpler and more devastating: human beings can track the position of their elbows but not the movement of their own thinking. We do not notice when our attitude toward a stranger has been shaped by someone else who merely resembles her. We do not notice when our certainty is a reflex rather than an insight. And because thought conceals its own mechanics — because the instrument cannot observe itself in motion — we mistake the map for the territory and then go to war over the borders.

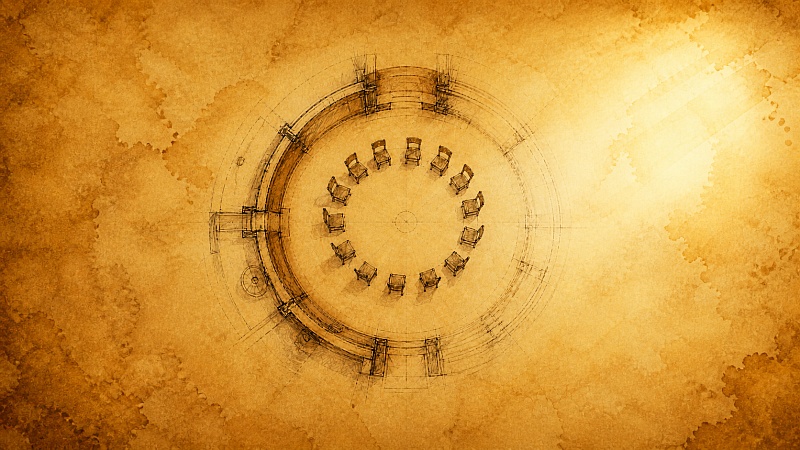

Bohm’s proposal was not a technology. It was a room. Twenty to forty people, seated in a circle, with no agenda, no leader, and no goal other than to watch their own thinking while it is happening. He called the practice Dialogue. And the thing he said it could produce, under the right conditions, was collective proprioception — a group becoming aware of how its own thought is moving, the way your body knows when you raise your arm.

He described the room. He described the principles. He described the frustration that would come first and the coherence that would come later. What he could not solve was a practical problem: how do you find the twenty people? How do you sustain the room across geography and time? How do you train the person who holds the space — and then, as Bohm himself insisted, how do you make that person redundant as quickly as possible?

He died in 1992, having drawn the blueprint for a room the world did not yet know how to build at scale.

For thirty years, we have been building the rooms by hand.

Every Wednesday evening, a door opens in Santa Clara. An hour of silence. A circle of sharing. A meal cooked by my parents: some fifty thousand meals over the years, give or take, served to friends and strangers in our living room. No curriculum. No fees. No teachers. Just whoever shows up, sitting together in the quiet.

There are now similar rooms in over a hundred cities. Some have been meeting weekly for a decade. Some started last month. The format is simple: silence, a reading, a circle, a meal. What Bohm described in theory, these rooms have been practicing in fact — without knowing his name, without referencing his papers, without any technology more advanced than a stove.

The challenge was always the same one Bohm could not solve: the rooms are handmade. Each one requires a host who understands the difference between leading and tending. Each one depends on physical proximity — you have to live near the living room. And each one grows at the speed of relationship, which is the speed of trust, which is the speed of walking.

So the question: can technology help build more rooms without breaking what makes them work?

We recently tried.

We started with fifty people from a pod: a week-long journey in which a thousand strangers from fifty-four countries read the same teachings, reflected daily, and tended each other’s words. Behind the scenes, forty-one volunteers had given over a thousand hours to hold that week, reading every reflection, leaving comments designed not to evaluate but to notice goodness, and writing 823 personal replies. The field was already alive before any algorithm touched it.

From that field, in January, an AI we call the Circle Agent sorted fifty podmates into six small groups of four to six. Not by surface demographics, but by layered gradients of resonance that build pathways from me to we to us.

The AI had read their reflections — hundreds of them — and noticed patterns that an individual couldn't see from inside their own writing. It matched people less on “you both live in California” and more on “you are both holding questions about the boundary between surrender and avoidance.” Each circle was given a theme drawn from their own words, a volunteer anchor, and three weekly calls. Then the AI stepped back.

We called them Metta Circles. What happened surprised us.

In Montana, JT — a woman whose deepest language has always been with animals — walked into her first call as anchor. Her natural gift is vulnerability; she opens doors by being the first one through. But the circle asked something different. Recede. Hold. Let the field do the work. She described the inner negotiation: “I could feel my own internal dialogue — should I do the ServiceSpace way or the JT way?” She chose her way, then watched it bend toward something neither she nor the method had predicted. By the third call, when she announced it was the last, everyone unmuted at once.

“No floaties,” she wrote afterward. “Go to the deep end. We’re good.”

In Michigan, a nuclear physicist named Ray told his circle about taking students to visit the defense company where he used to work. Among the autonomous drones and weapons systems, something cracked open in him: a spiritual reckoning with what he had once helped build. He began to cry. A twelve-year-old put an arm around him. “It’s okay to cry, Mr. Kaufman.”

No AI produced that moment. But AI had placed Ray in a room with four strangers who could hold it.

In Los Angeles, an anchor named Song struggled with the AI-generated summary of her first call. The words were beautiful. Lyrical, even. And they did not feel like hers.

“I felt bullied by AI a bit — this kind of sense of, ‘I know better than you.’ And seductive, too, because the words are so lyrical and beautiful. I really felt seduced into trying to be something other than myself.”

She paused.

“And I just said, you know what, I’m gonna be my sometimes inarticulate, word-salad self. But my heart is there.”

That is collective proprioception. Not the AI producing it — but a human noticing the subtle way a technology was reshaping her posture, and choosing to stay in her own body instead. Bohm would have recognized it instantly.

And then there was the thing we did not expect.

With participants’ consent, the calls were recorded so anchors could receive summaries. That part was planned. What was unplanned was what the AI began to notice in the transcripts. It could detect, with surprising precision, the duration of each person’s sharing. Whether someone had been interrupted. Whether the anchor had drifted from peer mode into teaching mode. Whether the emotional arc of the call was deepening or flattening. The overall sentiment — not as a score, but as a shape.

One anchor used it to notice that a participant had been consistently cut short. Another realized she was unconsciously steering the group toward resolution when the real work was in the not-knowing. Those are genuine gifts — reflections a host might not catch from inside the room, offered back gently after the fact.

But there is something uncomfortable about it, too. A room where people practice radical trust, recorded and analyzed by a machine they cannot see. Song had already named the feeling: seductive and bullying at once. The summaries were lyrical, insightful — and they were not hers. A third anchor, Michael, a retired educator, simply ignored the AI’s suggestions and brought poems instead. Later he put it more precisely: “What I wrote from my own scribbles was more heart-aligned than what the AI produced — even though I think the AI may have noticed some things I didn’t pick up.” His verdict after three calls: “The technology is great for creating circles that might work. And then the circle magic can take over.”

We do not have this figured out. When to mirror and when to stay silent. How much to surface and how much to leave in the room. A technology that can see what the host cannot is also a technology that can reshape what the room is willing to say. The tension is real, and we are sitting in it — which may be the only honest place to sit.

A participant named Melanie asked the question that mirrored what we were holding: “Did we, together, generate our group coherence — or did that result from the AI selection factor?”

The answer, of course, is yes. But not evenly. Before the algorithm matched anyone, a thousand strangers had already spent a week reading, reflecting, and holding each other’s words with care. Forty-one volunteers had tended that field in silence. The pod had done what pods do: build the soil in which trust becomes possible. The AI did not create the coherence. It found people in whom coherence was already stirring, and gave them a room small enough to hear each other.

This is easy to miss, and important not to. The temptation is to credit the matching, the algorithm, the pattern recognition, because those are legible. What is not legible is the long practice that comes first, like the fifteen years that preceded the Salt March. Gandhi walked to the sea with seventy-eight people who had been practicing together, daily, for years. The march looked spontaneous. It was not. The field had been built long before anyone started walking.

We are not a corporation racing to optimize the status quo at scale for short-term impact. We are a small community that has been building rooms by hand on a far longer timeline, and we are now asking, with genuine uncertainty, what happens when you hand a trowel to a machine.

The experiments are under way (the latest: Socrates). The answers are not yet in.

In 1665, the Dutch physicist Christiaan Huygens hung two pendulum clocks from a wooden beam laid across two chairs. Within half an hour, the pendulums synchronized. He disturbed them. They re-synchronized. He blocked air currents between them. They still synchronized.

The coupling was through the beam. The beam did not swing. It did not keep time. It merely transmitted: vibrations too subtle for the eye, traveling through wood, connecting two things that could not have found each other on their own.

Most AI is designed to be the clock. It keeps time. It gives answers. You ask; it responds.

We are building technology designed to be the beam. The best technology doesn't become the center of attention. It helps humans find each other’s rhythm — and then disappears.

At the beginning, the technology is dense: reading a thousand reflections, surfacing questions nobody asked, matching resonances across fifty-four countries, mirroring the emotional weather of a group back to itself. A constellation of volunteers tends the human dimension no algorithm touches.

But at every threshold, the technology hands authority back to the human heart, so that the heart can pay it forward to an even larger field. The AI proposes circles; a human anchor holds them. The AI summarizes a call; the anchor may use it, ignore it, or bring a poem instead. After the circles, some people want to meet in person, and the technology becomes a signup form. After that, a living room in Santa Clara. Or Surat. Or Ho Chi Minh City. Or a town square in North Carolina where a woman has sat every Wednesday for eight years with a sign around her neck that reads “Love.” Thousands of such gatherings have now taken place, across more than a hundred cities. No technology at all. Just a door that opens. Just a meal. Just silence.

And beyond even these: a retreat. Three to ten days. No phones. No agenda beyond three words (Me, We, Us), which are not stages to complete but nested dimensions of being human. Over a hundred of these have been held across countries and continents: Vietnam, Austria, Japan, India, the United States. At the closing circle of our recent retreat in Europe, an Englishman turned his kind gaze toward a Colombian woman with whom he had not exchanged a single word: “What is it about the space that we have all co-created here that I feel comfortable sharing my deepest held secrets with a complete stranger?” That is not a sentence any technology produces. It is a sentence that emerges when people sit together long enough for even language to become unnecessary.

And the room does not end in the room. Coherence, once generated, does not stay where it was made. It moves outbound — into gestures no one planned, toward people no one anticipated.

At that same retreat in Austria, a participant heard a social worker describe his work with homeless people in Vienna. Without being asked, the participant offered his sleeping bag. Weeks later, on a freezing night, a woman rang the doorbell of a shelter that was full. There were no beds. But there was a sleeping bag, one that had traveled hundreds of kilometers from a small village, given by a man who would never know her name. The social worker told her the story, and she asked him to pass on her thanks. The shortest distance, he wrote, is between two hearts.

By the time people are in a living room, the AI is a faint memory. By the time they are on retreat, it is no memory at all. By the time a sleeping bag reaches a stranger on a freezing night, the beam has long since been forgotten. The clocks are synchronized. And the ripple has outrun its source.

AI does not replace the room. It lowers the cost of building the room — and then walks out of it.

But building the room requires three things that almost never converge.

You need someone who can build a system that reads a thousand reflections and finds five people who share an unnamed question. You need someone who can cook a meal that changes a stranger’s week. And you need someone who understands — not theoretically but in their body — that a group in coherence accesses something no individual mind can reach alone.

Silicon Valley has the first. Community organizers have the second. Contemplative traditions have the third. They rarely speak the same language. The coder optimizes for metrics the silence resists. The community builder cannot find, across fifty-four countries, the four strangers whose questions rhyme. The contemplative practitioner knows how to sit in silence, not how to query a database — and may not wish to.

In these circles, all three converged. The technology found the room. The community held it. And the wisdom — the kind that arrives when you stop reaching for it — came through the silence that no one had to engineer.

Kris, from Columbus, wrote afterward: “My new favorite thing about AI is that it brought me into this circle.”

That sentence holds the entire thesis. The AI is not the gift. The circle is the gift. But without the AI, Song and Ray and Bob and Kris would never have found each other: a hospice volunteer in Los Angeles, a teacher in Pontiac, a retired engineer in Mississauga, and a woman in Columbus who grows pawpaw trees in her basement and teaches social justice to college students.

After the pilot, many circles independently asked to keep meeting — and did. The AI did its work, and the humans took it from there.

Song offered the phrase that stayed with all of us: AI belongs in the back pocket, not the front pocket.

Bohm imagined a room where thought could observe itself. We are building the hallway that leads to the room. The hallway is made of silicon and data and pattern recognition. The room is made of silence and strangers and a meal someone cooked because they wanted to.

The technology fades. The room remains.

And what happens in the room — Bohm knew this, and we are learning it — is the one thing that no technology can produce, purchase, or replace: the moment a group of human beings, attending to each other with their full presence, begins to think together in a way that none of them could think alone.

Collective proprioception. The field, knowing where its arm is. And raising it.
